Executive summary

Sales AI in 2026 is not evaluated on dashboards or feature coverage.

The bottleneck is no longer data access, but decision speed.

Most teams see risk early, but act too late.

Effective systems reduce uncertainty fast enough to change outcomes.

This requires probabilistic models, automation, and market-level signals.

Only decision and orchestration engines are built for this.

Why traditional evaluation criteria no longer work

For most of the last decade, revenue teams evaluated sales platforms the same way they evaluated CRMs: by surface coverage.

The buying checklist was familiar. A single dashboard to centralize data. A long list of integrations. Configurable hierarchies to mirror the org chart. Forecast roll-ups that aligned with management layers. The assumption was simple: if everything was visible, leaders would make better decisions.

That logic no longer holds.

In 2026, the problem is not visibility. Most revenue teams already have more dashboards than they can operationalize. The problem is that those dashboards describe a world that is already outdated by the time it is reviewed.

Traditional criteria optimized for inspection, not intervention. They measure how well a tool aggregates internal signals, not whether it helps teams change outcomes while there is still time. A forecast that explains why the quarter will miss is no longer sufficient if it cannot influence what should happen next.

Three structural shifts made legacy evaluation criteria obsolete:

First, internal data is no longer enough. CRM history reflects past behavior inside a single company. It does not account for external market dynamics, buyer fatigue, competitive pressure, or macro shifts that affect every deal simultaneously.

Second, human judgment no longer scales. As data volume increases, asking managers to interpret dozens of signals manually creates latency, inconsistency, and bias. The bottleneck is no longer data access; it is decision capacity.

Third, automation expectations have changed. Modern revenue teams do not want more alerts or nudges. They expect systems to execute reliably without requiring perfect data hygiene or constant human intervention.

Evaluating sales AI based on UI quality, number of integrations, or dashboard flexibility misses the real question revenue leaders are trying to answer today:

Does this system help us make the right decisions early enough to change the outcome?

Everything else is secondary.

The criteria that actually matter in 2026

In 2026, evaluating sales AI is no longer about feature coverage. It’s about whether the system can reduce uncertainty fast enough to change revenue outcomes. Five criteria consistently separate legacy platforms from decision-grade systems.

Data Scope: Internal Signals vs Market Reality

Most tools still operate on a closed loop: CRM fields, activity logs, call transcripts. That data explains internal behavior, not external forces.

What matters now is whether a platform can contextualize deals against market-wide signals: buyer movement, sector slowdowns, role changes, competitive pressure.

A system trained only on your own history will always be late to reality.

Decision Capability: Insight vs Prescription

Surfacing risk is table stakes. The real differentiator is whether the system can recommend, or even take, a concrete action.

Modern teams evaluate AI on its ability to answer: what should change today to alter this outcome?

If the output stops at insight, the human becomes the bottleneck again.

Automation Depth: Assistance vs Autonomy

Automation is no longer about logging activity or triggering reminders.

The question is whether the platform can execute reliably without perfect inputs: updating systems, adjusting priorities, initiating follow-ups, correcting data drift.

Shallow automation reduces effort. Deep automation reallocates capacity.

Learning Loop: Static Models vs Continuous Adaptation

Legacy systems learn slowly, if at all. Models are tuned on historical patterns and refreshed periodically.

Decision-grade platforms improve continuously by observing outcomes across deals, teams, and markets.

The tighter the learning loop, the faster the system adapts to changing buyer behavior.
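The difference between periodic refreshes and a continuous loop can be illustrated with a simple online estimator. The sketch below is one standard technique (a Beta-Bernoulli update of a win rate), chosen for illustration; it is not a description of any vendor's model, and the class name, prior, and deal outcomes are hypothetical. Each closed deal updates the estimate the moment its outcome is known, instead of waiting for the next batch retrain.

```python
from dataclasses import dataclass


@dataclass
class WinRateEstimate:
    """Beta(alpha, beta) belief about a segment's win rate (illustrative model)."""

    alpha: float = 1.0  # prior pseudo-count of wins (uniform prior)
    beta: float = 1.0   # prior pseudo-count of losses

    def observe(self, won: bool) -> None:
        # Each closed deal updates the estimate immediately,
        # rather than waiting for a periodic batch retrain.
        if won:
            self.alpha += 1
        else:
            self.beta += 1

    @property
    def mean(self) -> float:
        # Posterior mean of the win rate.
        return self.alpha / (self.alpha + self.beta)


estimate = WinRateEstimate()
for outcome in [True, False, False, True, False]:  # hypothetical closed deals
    estimate.observe(outcome)
print(round(estimate.mean, 3))  # 3 / 7 -> 0.429
```

To make the estimate track shifting buyer behavior more aggressively, one option is a forgetting factor: shrink `alpha` and `beta` slightly (for example, multiply both by 0.95) before each update so that older outcomes carry less weight.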

Forecast Truthfulness: Confidence vs Probability

Most forecasts still reflect human optimism aggregated through the roll-up process.

What revenue leaders increasingly demand is probabilistic truth: a daily, unbiased view of likelihood based on observable signals, not declared intent.

Accuracy is no longer about explaining variance after the fact. It’s about exposing risk early enough to act.
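The contrast between declared intent and probability can be made concrete. The sketch below compares a traditional commit roll-up with a probability-weighted expected value and its spread. The deals, amounts, and `model_probability` values are hypothetical, and treating deals as independent Bernoulli outcomes is a simplifying assumption; real deals are often correlated (for example, by a shared sector slowdown).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Deal:
    name: str
    amount: float
    rep_commit: bool          # declared intent: the rep says it will close
    model_probability: float  # hypothetical signal-based P(close), 0..1


def committed_forecast(deals: List[Deal]) -> float:
    # Traditional roll-up: count every committed deal at full value.
    return sum(d.amount for d in deals if d.rep_commit)


def expected_forecast(deals: List[Deal]) -> float:
    # Probability-weighted view: each deal contributes amount * P(close).
    return sum(d.amount * d.model_probability for d in deals)


def forecast_std_dev(deals: List[Deal]) -> float:
    # Assuming independent Bernoulli outcomes:
    # Var = sum(p * (1 - p) * amount^2).
    variance = sum(
        d.model_probability * (1 - d.model_probability) * d.amount ** 2
        for d in deals
    )
    return variance ** 0.5


pipeline = [
    Deal("Acme", 100_000.0, True, 0.8),
    Deal("Globex", 50_000.0, True, 0.3),
    Deal("Initech", 80_000.0, False, 0.5),
]
print(committed_forecast(pipeline))
print(expected_forecast(pipeline))
print(forecast_std_dev(pipeline))
```

Here the commit number counts Globex at full value despite a low probability and ignores Initech entirely, while the probability-weighted view includes both and reports how wide the range of outcomes is.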

Taken together, these criteria shift evaluation from how well a platform visualizes the past to how effectively it influences the future.

This is the lens modern revenue teams use, whether vendors explicitly acknowledge it or not.

How revenue platforms actually cluster in practice

In 2026, most revenue platforms use similar language (“AI”, “intelligence”, “orchestration”) but operate on fundamentally different architectures. In practice, enterprise buyers encounter four distinct categories, each optimized for a specific layer of the revenue problem.

1. Forecast-first platforms (the process layer)

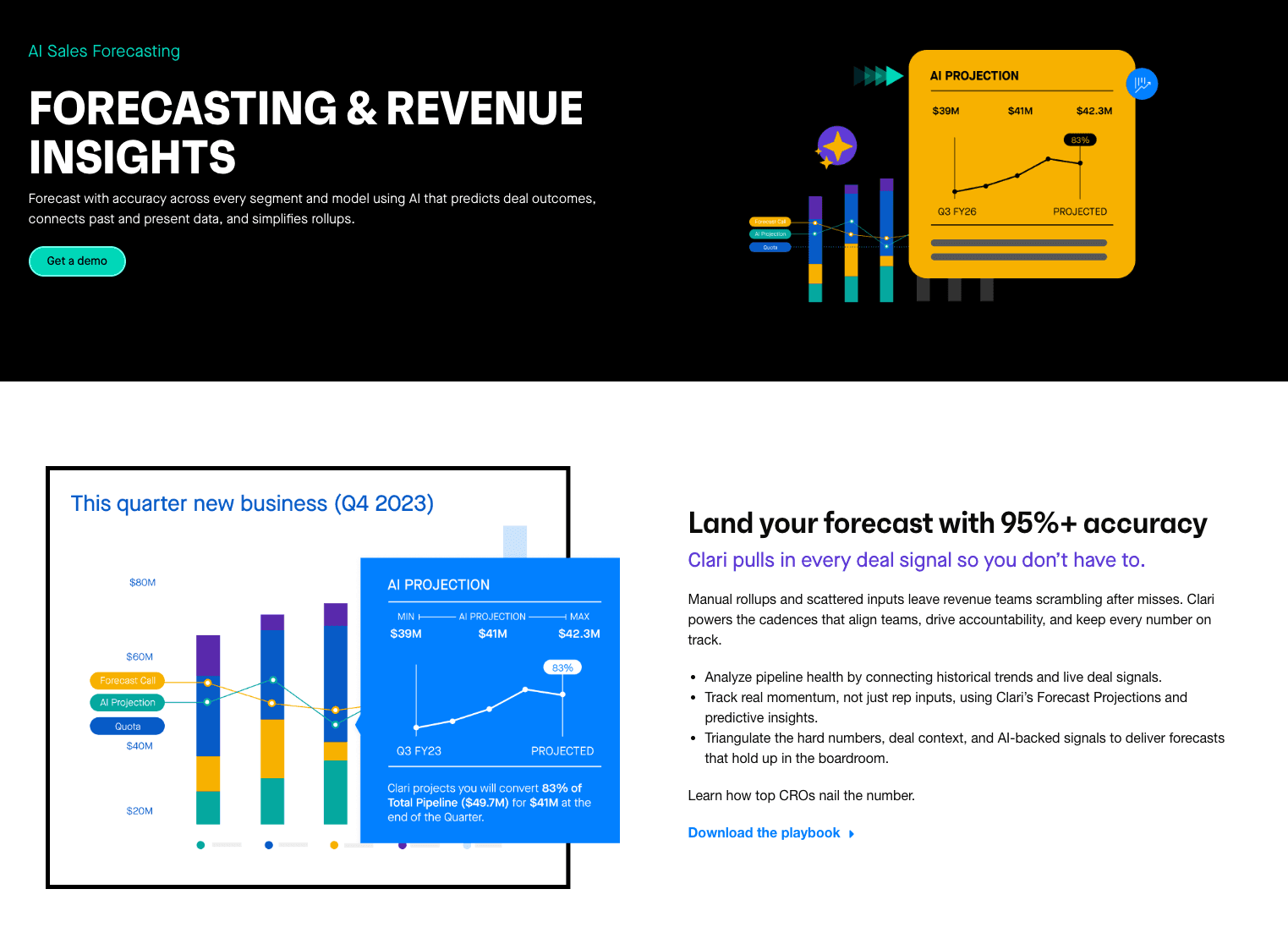

Primary Function: These systems digitize the weekly forecasting ritual. They replace spreadsheets with a purpose-built interface for submitting numbers, inspecting pipeline, and managing hierarchy roll-ups (Rep → Manager → VP → CRO).

Primary Data Source: Internal CRM snapshots (time-series data). They capture a snapshot of Salesforce or HubSpot data every 15 minutes to visualize changes over time.

Core Competency: Process rigor and executive visibility. They excel at answering "What changed since last week?" and enforcing discipline around deal stages.

Structural Limitations: Because they rely on internal historical data ("Single-Tenant" architecture), they are primarily descriptive: they visualize the gap to quota but rely on human managers to determine how to close it. As revenue complexity increases, these platforms tend to scale process discipline faster than decision quality.

Representative Examples: Clari, Salesforce Revenue Intelligence.

Forecast-first platforms focus on pipeline visibility and roll-ups, helping leaders track changes quarter over quarter, but still require human judgment to decide what to do next.
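At its core, answering "What changed since last week?" means comparing two pipeline snapshots. A minimal sketch, assuming each snapshot is a dictionary from hypothetical opportunity IDs to `(stage, amount, close_date)` tuples; real platforms track many more fields and store full history rather than two points in time.

```python
from typing import Dict, List, Tuple

# Hypothetical snapshot shape: opportunity ID -> (stage, amount, close_date).
Snapshot = Dict[str, Tuple[str, float, str]]
FIELDS = ("stage", "amount", "close_date")


def diff_snapshots(last_week: Snapshot, this_week: Snapshot) -> List[str]:
    """List additions, removals, and field-level changes between snapshots."""
    changes = []
    for opp_id in sorted(set(last_week) | set(this_week)):
        before = last_week.get(opp_id)
        after = this_week.get(opp_id)
        if before is None:
            changes.append(f"{opp_id}: added to pipeline")
        elif after is None:
            changes.append(f"{opp_id}: removed from pipeline")
        else:
            for field, old, new in zip(FIELDS, before, after):
                if old != new:
                    changes.append(f"{opp_id}: {field} {old} -> {new}")
    return changes


last_week = {
    "OPP-1": ("Proposal", 50_000.0, "2026-03-31"),
    "OPP-2": ("Discovery", 20_000.0, "2026-06-30"),
}
this_week = {
    "OPP-1": ("Negotiation", 50_000.0, "2026-04-30"),
    "OPP-3": ("Discovery", 15_000.0, "2026-06-30"),
}
changes = diff_snapshots(last_week, this_week)
for line in changes:
    print(line)
```

Note what the diff does not say: it reports that OPP-1 slipped a month, but deciding whether to intervene is still left to a manager, which is the structural limitation described above.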

2. Conversation intelligence platforms (the context layer)

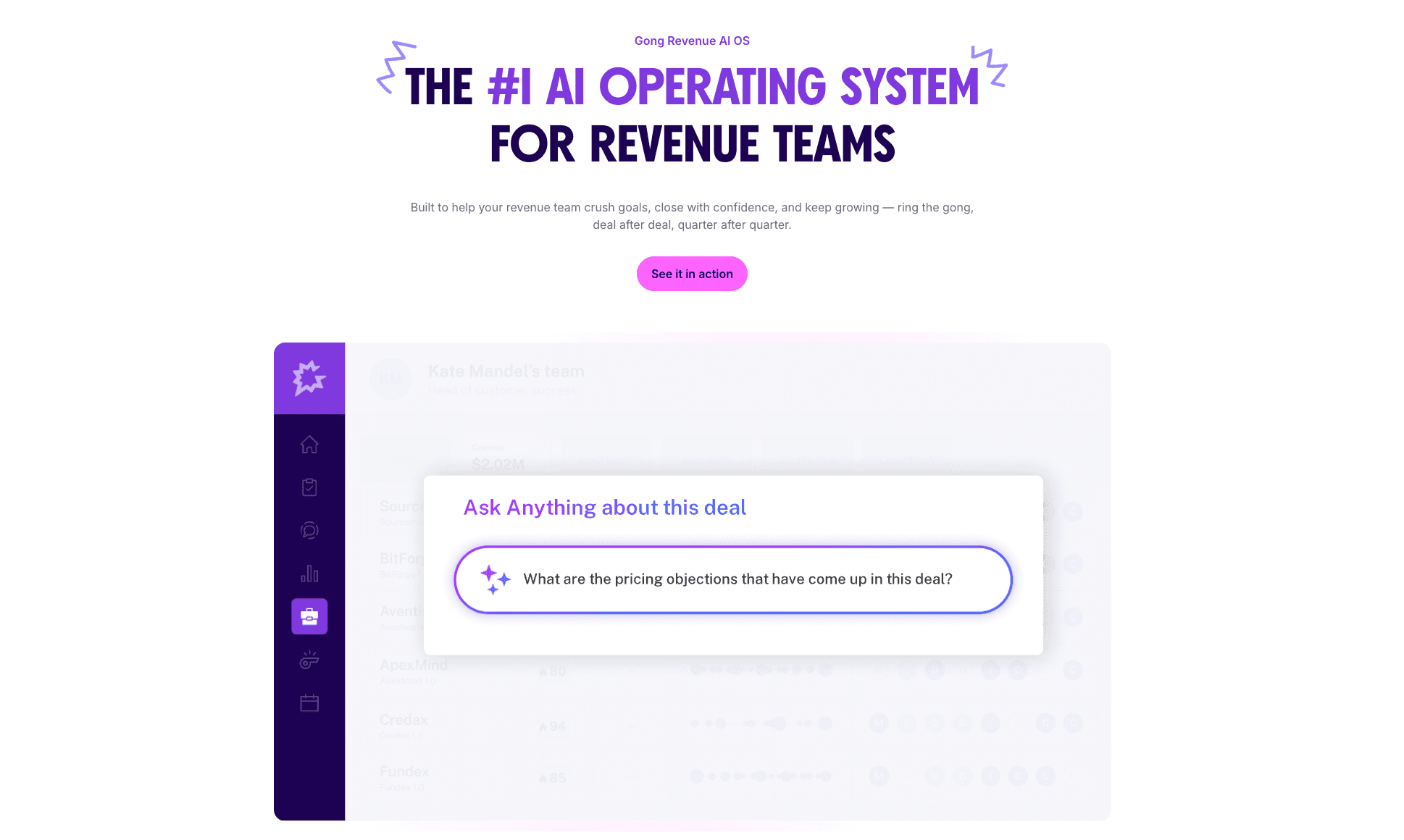

Primary Function: These tools capture unstructured data (voice and video calls) to provide a "game tape" for sales teams. Their original purpose was coaching and training, though many have expanded into deal inspection to validate CRM reality.

Primary Data Source: Unstructured audio/video call recordings, their transcripts, and email threads.

Core Competency: Reality verification. They validate whether specific keywords (competitors, pricing, objections) were actually spoken, providing a "ground truth" that CRM data often lacks.

Structural Limitations: While effective at analyzing what happened inside a meeting, they are structurally limited to interaction-level context rather than market-level signals. They cannot tell whether a buyer has gone quiet because of a bad meeting or because of a sector-wide spending freeze.

Representative Examples: Gong, ZoomInfo Chorus.

Conversation intelligence platforms surface what was said in meetings, providing critical context for coaching and deal review, without prescribing next actions.
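The "reality verification" described above boils down to checking whether tracked topics were actually spoken. Commercial products rely on speech-to-text and trained language models; the sketch below swaps in plain phrase matching on an existing transcript to show the idea. The tracker categories and phrases (including the competitor names) are hypothetical.

```python
import re
from typing import Dict, List

# Hypothetical trackers a team might configure.
TRACKERS: Dict[str, List[str]] = {
    "competitor": ["acme analytics", "globex"],
    "pricing": ["discount", "price", "budget"],
    "objection": ["too expensive", "not a priority"],
}


def detect_mentions(transcript: str) -> Dict[str, List[str]]:
    """Return, per category, the tracked phrases found in the transcript."""
    text = transcript.lower()
    hits: Dict[str, List[str]] = {}
    for category, phrases in TRACKERS.items():
        # Word boundaries avoid matching "price" inside "priceless", etc.
        found = [p for p in phrases if re.search(rf"\b{re.escape(p)}\b", text)]
        if found:
            hits[category] = found
    return hits


transcript = (
    "We're also looking at Globex, and honestly this is "
    "not a priority until the budget resets."
)
hits = detect_mentions(transcript)
print(hits)
```

Even a perfect match only confirms what was said in the call. Nothing in the transcript reveals whether "the budget resets" reflects one account or a sector-wide freeze, which is the interaction-level limit noted above.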

3. Data foundation & activity platforms (the hygiene layer)

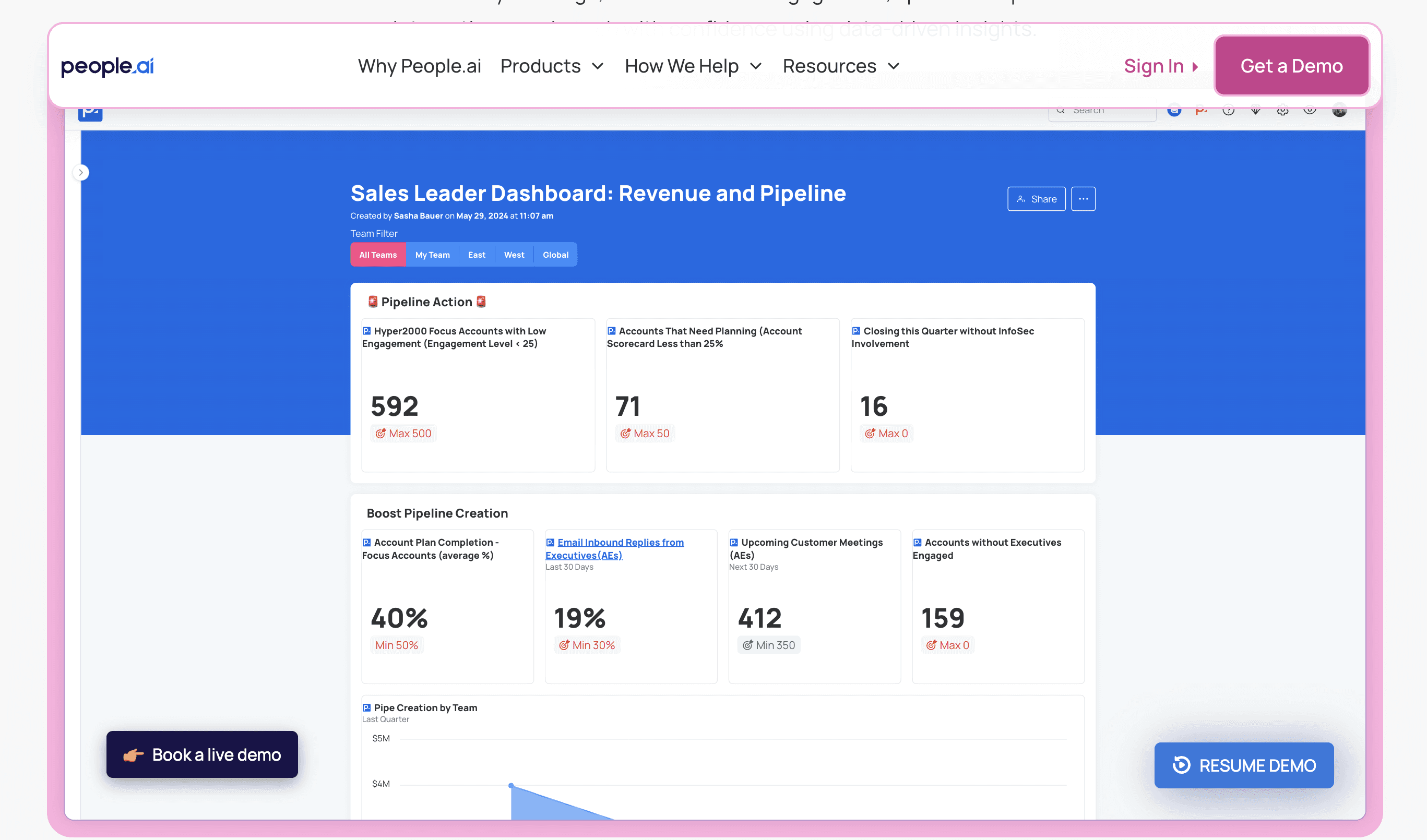

Primary Function: These platforms operate as "plumbing" to solve the data entry crisis. They automatically scrape metadata from email, calendars, and collaboration tools to populate the CRM.

Primary Data Source: Metadata from communication systems (Microsoft 365, Google Workspace).

Core Competency: CRM hygiene and attribution. They ensure activity logs are accurate without requiring rep intervention, which is essential for accurate reporting.

Structural Limitations: They provide the raw material (data) required for decision-making but do not typically contain the logic engines required to recommend strategic pivots. These platforms are a prerequisite for intelligence, but not intelligence itself.

Representative Examples: People.ai, Einstein Activity Capture.

Activity-capture platforms ensure CRM completeness by automatically logging emails, meetings, and interactions, forming the data foundation for downstream analytics.
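The "plumbing" these platforms provide can be sketched as matching communication metadata to CRM accounts. A minimal illustration, assuming a hypothetical domain-to-account lookup and a simplified email metadata record (no message bodies); production systems also handle calendars, contact-level matching, and ambiguous domains.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class EmailMetadata:
    sender: str
    recipients: List[str]
    subject: str
    sent_at: str  # ISO 8601 timestamp


# Hypothetical CRM lookup: customer email domain -> account ID.
ACCOUNT_BY_DOMAIN: Dict[str, str] = {"acme.com": "ACC-001", "globex.com": "ACC-002"}


def match_account(msg: EmailMetadata) -> Optional[str]:
    # Use the first participant whose domain belongs to a known account.
    for address in [msg.sender, *msg.recipients]:
        domain = address.rsplit("@", 1)[-1].lower()
        if domain in ACCOUNT_BY_DOMAIN:
            return ACCOUNT_BY_DOMAIN[domain]
    return None


def to_activity_records(messages: List[EmailMetadata]) -> List[dict]:
    """Turn matched email metadata into CRM-style activity records."""
    records = []
    for msg in messages:
        account_id = match_account(msg)
        if account_id is None:
            continue  # internal or unknown mail is skipped, not logged
        records.append({
            "account_id": account_id,
            "type": "email",
            "subject": msg.subject,
            "timestamp": msg.sent_at,
        })
    return records


inbox = [
    EmailMetadata("rep@ourcompany.com", ["Jane@Acme.com"], "Pricing follow-up", "2026-02-03T15:04:00Z"),
    EmailMetadata("rep@ourcompany.com", ["manager@ourcompany.com"], "Pipeline review", "2026-02-03T16:00:00Z"),
]
records = to_activity_records(inbox)
print(records)
```

The output is cleaner inputs, not a decision: the records say an email went to Acme, but not whether that account deserves more attention than any other.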

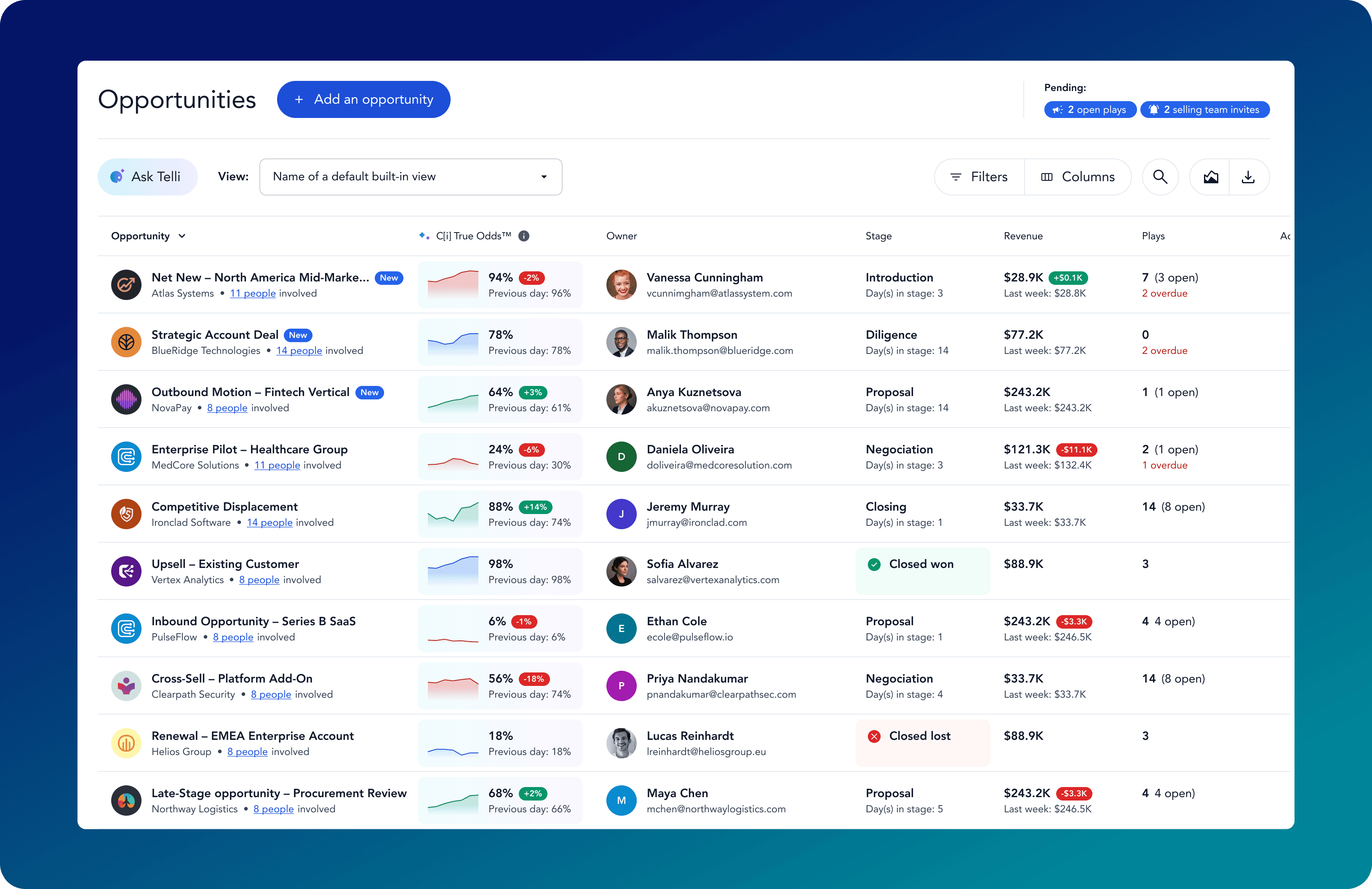

4. Decision & orchestration engines (the action layer)

Primary Function: This emerging category focuses on prescribing actions rather than just reporting on them. Unlike the previous categories, which require humans to interpret data, decision engines use logic and network-level signals to recommend specific interventions.

Primary Data Source: A combination of internal CRM data and external network data (buying signals aggregated across multiple organizations).

Core Competency: Probability and execution. By analyzing patterns beyond a single company's history, these engines can calculate mathematical probabilities ("True Odds") and deploy agents to execute tasks.

Structural Limitations: As a newer architectural layer, these systems require a shift in trust. Leaders must be willing to trust algorithmic recommendations over traditional "gut feel."

Key Distinction: Unlike insight platforms, decision engines are evaluated on their ability to reduce human arbitration, not increase visibility. In mature implementations, recommendations are continuously updated and partially executed without manual intervention.

Representative Examples: Emerging "System of Decision" platforms and Agentic AI solutions.

As revenue teams scale, the performance ceiling of each category becomes visible. The question is no longer which tool provides better visibility, but which architecture can reliably influence outcomes.

Decision engines continuously evaluate deal probability and recommend actions in real time, reducing reliance on manual forecast calls and subjective judgment.
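The source does not specify how decision engines compute their probabilities. To illustrate the general pattern of combining internal and external signals into a probability and then mapping it to an action, the sketch below swaps in a logistic score with hand-set weights. Every signal name, weight, threshold, and action string is hypothetical; a real system would learn its weights from observed outcomes.

```python
import math
from dataclasses import dataclass


@dataclass
class DealSignals:
    days_since_last_buyer_reply: int  # internal: activity data
    champion_changed_roles: bool      # external: role-change signal
    sector_spend_index: float         # external: hypothetical index, 0 = flat


# Hypothetical coefficients, hand-set for illustration only.
WEIGHTS = {"bias": 0.5, "silence": -0.05, "champion": -1.2, "sector": 0.8}


def close_probability(s: DealSignals) -> float:
    # Logistic model: p = 1 / (1 + exp(-z)).
    z = (
        WEIGHTS["bias"]
        + WEIGHTS["silence"] * s.days_since_last_buyer_reply
        + WEIGHTS["champion"] * float(s.champion_changed_roles)
        + WEIGHTS["sector"] * s.sector_spend_index
    )
    return 1.0 / (1.0 + math.exp(-z))


def recommend(s: DealSignals) -> str:
    """Map a probability plus its strongest driver to a concrete next step."""
    if close_probability(s) >= 0.6:
        return "no action: keep current plan"
    if s.champion_changed_roles:
        return "re-establish an executive sponsor"
    if s.sector_spend_index < 0:
        return "reprioritize: sector-wide slowdown"
    return "schedule follow-up: buyer has gone quiet"


for signals in [
    DealSignals(2, False, 0.5),
    DealSignals(10, True, 0.0),
    DealSignals(20, False, -1.0),
    DealSignals(20, False, 0.5),
]:
    print(round(close_probability(signals), 2), recommend(signals))
```

The design point is that the output is an action rather than a chart: the same low probability leads to different next steps depending on whether the driver is a lost champion, a market slowdown, or simple buyer silence.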

Why visibility-based platforms plateau as revenue complexity increases

Visibility tools perform well in stable environments. When deal cycles are predictable, buyer behavior is consistent, and pipeline risk is mostly internal, dashboards and inspection workflows are sufficient. The problem is that most enterprise GTM teams no longer operate in those conditions.

As revenue complexity increases, more variables fall outside the CRM: budget freezes, buying committees reshuffled mid-cycle, vendors deprioritized for reasons unrelated to performance. In these environments, additional visibility does not translate into better outcomes. It simply increases the volume of signals humans are expected to arbitrate.

This is the structural ceiling of visibility-based platforms.

Forecast-first tools surface more exceptions, not better decisions. Conversation intelligence adds context, but only at the interaction level. Activity platforms improve data completeness, but not prioritization. Each layer increases informational fidelity while leaving the core decision loop manual.

The result is a widening execution gap:

Leaders know more about what is happening.

Reps receive more alerts, nudges, and dashboards.

Yet interventions still happen late, inconsistently, or based on intuition.

At scale, this model breaks down. Human managers become the bottleneck, forced to reconcile conflicting signals across tools, regions, and accounts. Decision quality becomes uneven, dependent on individual experience rather than systemized logic. Teams react faster than before, but not early enough to change outcomes reliably.

This is why mature revenue organizations eventually stop asking “How do we get better visibility?” and start asking a different question:

Where does decision leverage actually sit, and how do we systematize it?

That shift, from inspection to intervention, is what separates platforms that inform from platforms that influence results.

How modern revenue teams choose the right platform

At this stage, the question is no longer “Which tool has more features?”

It becomes “Where does our decision leverage actually sit?”

In 2026, high-performing revenue teams select platforms based on the type of outcome they need to systematize, not on brand category labels. The table below reflects how platforms are typically chosen in practice.

Decision-Oriented Evaluation Matrix

| Your Primary Goal | Technology Category | Data Scope Used | Decision Responsibility |
|---|---|---|---|
| Enforce forecast discipline and reporting cadence | Forecast-First Platforms | Internal CRM history (single-tenant) | Human managers interpret gaps |
| Improve rep coaching and call quality | Conversation Intelligence | Interaction-level context (calls, emails) | Managers and enablement teams |
| Fix CRM hygiene and activity completeness | Data Foundation Platforms | Communication metadata (email, calendar) | Automated capture, manual prioritization |
| Reduce late-stage risk and intervene earlier | Decision & Orchestration Engines | Internal + network-level signals | System recommends and triggers actions |
| Scale consistent prioritization across regions | Decision & Orchestration Engines | Cross-company behavioral patterns | Algorithmic logic over intuition |
| Minimize manual forecast calls and inspections | Decision & Orchestration Engines | Probabilistic models, continuous signals | Automated arbitration |

How to Read This Table

Visibility tools optimize awareness. They surface risk but depend on humans to resolve it.

Foundation tools optimize data quality. They improve inputs but do not arbitrate outcomes.

Decision engines optimize intervention. They exist to reduce human arbitration, not increase reporting.

This distinction explains why many organizations operate multiple platforms simultaneously yet still struggle with late interventions. They have invested heavily in seeing the problem, but lightly in deciding what to do about it.

For teams operating at scale, the long-term differentiator is not how clearly risk is visualized, but how early and consistently it is acted upon.


Other resources

Is cold outreach dead? Why network introductions are the future of B2B

Feb 9, 2026

Autonomous CRM: why manual data entry breaks forecasts

Feb 8, 2026

How modern revenue teams evaluate sales AI in 2026

Feb 7, 2026

Sales forecasting tools vs analytics platforms vs decision engines

Feb 6, 2026

What is a revenue operating system?

Feb 6, 2026
